- Blog

- Visual studio mac unity red underline

- Minecraft more player models mod 1-8

- Reimage cleaner apk patch3d

- Radford fgcu serial killer database

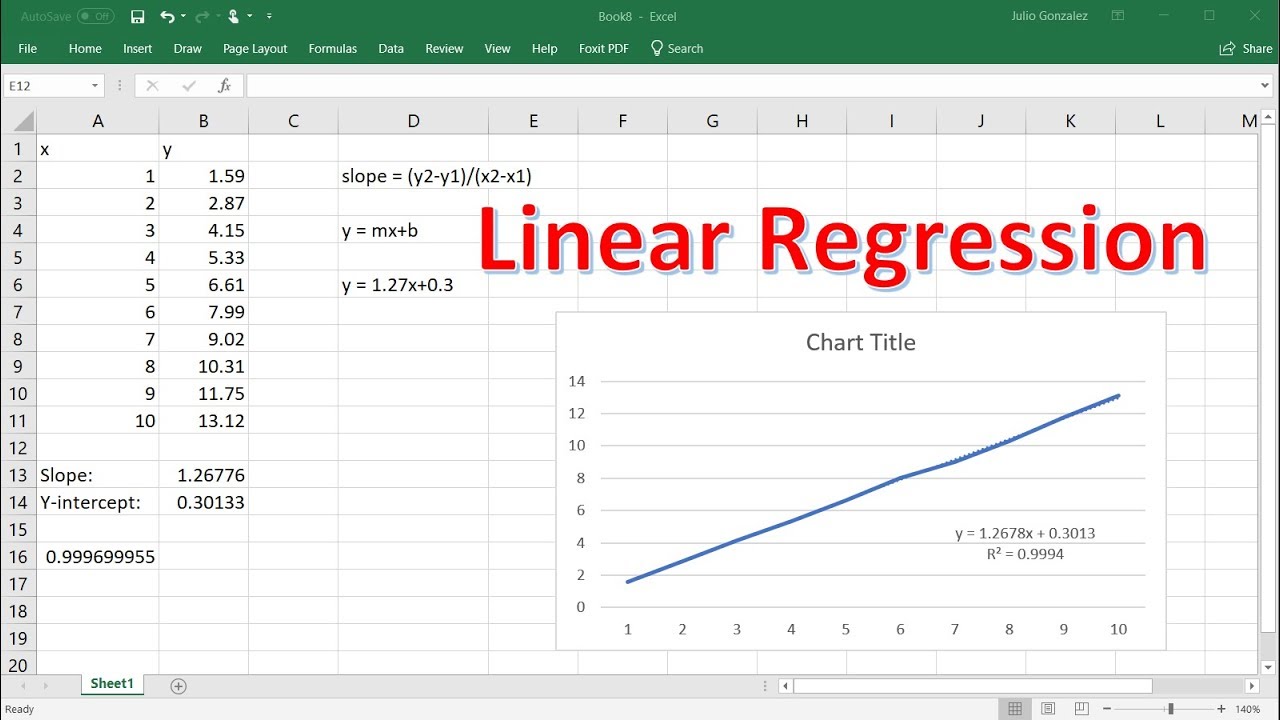

- Develop a simple linear regression equation excel ph stat

- Hunter x hunter english sub stream

- Free imovie 10 download

- Download chief architect extras

- Could not find file vita magix acid pro 8

- Download html editor for mac

- What is cyberlink youcam windows 10

- Find people fast and free

- Bajirao mastani full movie hd

- Bonecraft skidrow thepiratebay

Regression is a statistical technique that finds a linear relationship between x (input) and y (output). The equation for univariate regression can be given as

y = Beta1 + Beta2 * x

where y is the output/target/dependent variable, x is the input/feature/independent variable, and Beta1 and Beta2 are the intercept and slope of the best-fit line respectively, also known as the regression coefficients. The task is to find regression coefficients such that the line/equation best fits the given data.

Regression makes assumptions about the data for the purpose of analysis. Because of this, regression is restrictive in nature. It fails to build a good model with datasets that do not satisfy the assumptions, so a good model has to accommodate these assumptions.

Let us consider an example where we are trying to predict the sales of a company based on its marketing spend in various media: TV, Radio and Newspaper. Here the columns TV, Radio and Newspaper are the input/independent variables and Sales is the output/dependent variable. We will try to fit a linear regression to this dataset. Once the linear regression model has been fitted on the data, we use the predict function to see how well the model is able to predict sales for the given marketing spends. When we apply the regression equation to the given data, there will be a difference between the original values of y and the predicted values of y:

Residual e = Observed value - Predicted value

The score function reports the model's goodness of fit (for a linear regression this is typically R², the coefficient of determination), which indicates how well the model can predict a new data point.

Linear regression can capture only linear relationships, hence there is an underlying assumption that there is a linear relationship between the features and the target. Plotting a scatterplot of every individual variable against the dependent variable and checking each one for a linear relationship is a tedious process. Instead, we can check linearity directly by plotting the actual target values from the dataset against the values predicted by our linear model. If the points in this plot follow a roughly linear trend, we can assume that the relationship between the features and the target is also linear.

To test for normality in the data, we can use the Anderson-Darling test. Each test will return at least two things:

- Statistic: A quantity calculated by the test that can be interpreted in the context of the test by comparing it to critical values from the distribution of the test statistic.
- P-value: Used to interpret the test, in this case whether the sample was drawn from a Gaussian distribution.

Since our p-value of 2.88234545e-09 is less than 0.05, we reject H0, which states that the data is normally distributed.

Multicollinearity refers to correlation between independent variables. It is considered a disturbance in the data and, if present, will weaken the statistical power of the regression model. Pair plots and heat maps help in identifying highly correlated features.

Why should multicollinearity be avoided in linear regression? The interpretation of a regression coefficient is that it represents the mean change in the target for each unit change in a feature when you hold all of the other features constant. However, when features are correlated, a change in one feature in turn shifts other features. The stronger the correlation, the more difficult it is to change one feature without changing another. It becomes difficult for the model to estimate the relationship between each feature and the target independently because the features tend to change in unison.

The Variance Inflation Factor (VIF) is a measure of collinearity among predictor variables within a multiple regression. It is calculated as the ratio of the variance of a given coefficient (beta) in the full model to the variance of that coefficient if its feature were fit alone.
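As a minimal sketch of fitting the univariate regression and computing its residuals, the snippet below uses the closed-form least-squares estimates in plain Python. Least squares is assumed here as the meaning of "best fit". The data values are made up for illustration and are not taken from the sales dataset, and the function name `fit_line` is hypothetical; `beta1` and `beta2` follow the intercept/slope naming used in this post.

```python
def fit_line(x, y):
    """Return (beta1, beta2): intercept and slope of the least-squares line."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Slope = covariance of x and y divided by variance of x
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    beta2 = sxy / sxx
    beta1 = mean_y - beta2 * mean_x
    return beta1, beta2


# Made-up illustrative data (assumption, not the real marketing dataset)
spend = [1.0, 2.0, 3.0, 4.0, 5.0]
sales = [2.2, 4.1, 6.2, 7.9, 10.1]

beta1, beta2 = fit_line(spend, sales)

# Predicted values and residuals: e = observed - predicted
predicted = [beta1 + beta2 * xi for xi in spend]
residuals = [yi - pi for yi, pi in zip(sales, predicted)]

# R squared: share of the variance in y explained by the fitted line
mean_sales = sum(sales) / len(sales)
ss_res = sum(e ** 2 for e in residuals)
ss_tot = sum((yi - mean_sales) ** 2 for yi in sales)
r_squared = 1 - ss_res / ss_tot

print("intercept:", beta1, "slope:", beta2)
print("residuals:", residuals)
print("R squared:", r_squared)
```

With an intercept in the model, least-squares residuals sum to approximately zero, which is a quick sanity check on the fit.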
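For the multicollinearity check, one commonly used way to compute the VIF of a feature is 1 / (1 - R²), where R² comes from regressing that feature on the other features. With only two features, that R² equals the squared Pearson correlation between them, which is the simplification this sketch assumes. The spend values and the helper names `pearson_r` and `vif_two_features` are hypothetical.

```python
import math


def pearson_r(a, b):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    sab = sum((ai - mean_a) * (bi - mean_b) for ai, bi in zip(a, b))
    saa = sum((ai - mean_a) ** 2 for ai in a)
    sbb = sum((bi - mean_b) ** 2 for bi in b)
    return sab / math.sqrt(saa * sbb)


def vif_two_features(a, b):
    """VIF when the model has exactly two features: 1 / (1 - r^2)."""
    r_squared = pearson_r(a, b) ** 2
    if r_squared >= 1.0:
        return float("inf")  # perfectly collinear features
    return 1.0 / (1.0 - r_squared)


# Made-up illustrative spends (assumption, not the real dataset)
tv = [1.0, 2.0, 3.0, 4.0, 5.0]
radio = [2.0, 1.0, 4.0, 3.0, 5.0]      # moves largely in step with tv
newspaper = [4.0, 1.0, 5.0, 1.0, 4.0]  # uncorrelated with tv

vif_correlated = vif_two_features(tv, radio)
vif_uncorrelated = vif_two_features(tv, newspaper)

print("VIF (tv vs radio):", vif_correlated)
print("VIF (tv vs newspaper):", vif_uncorrelated)
```

A VIF of 1 means the feature is uncorrelated with the other one, and the value grows as the correlation strengthens. With more than two features, the R² would have to come from a full regression of each feature on all the others.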
